With ever more complex software and hardware in our embedded systems, it may seem natural to leave worst-case execution time (WCET) analysis until the very end of the software lifecycle. However, discovering timing problems at such a late stage is usually costly, not only because so much has already been committed to a particular design, but also because the changes needed to make software meet an execution time requirement are often much bigger than the equivalent changes to meet a functional requirement.

Ideally, you should aim for much earlier investigation of the execution time behaviour. For example:

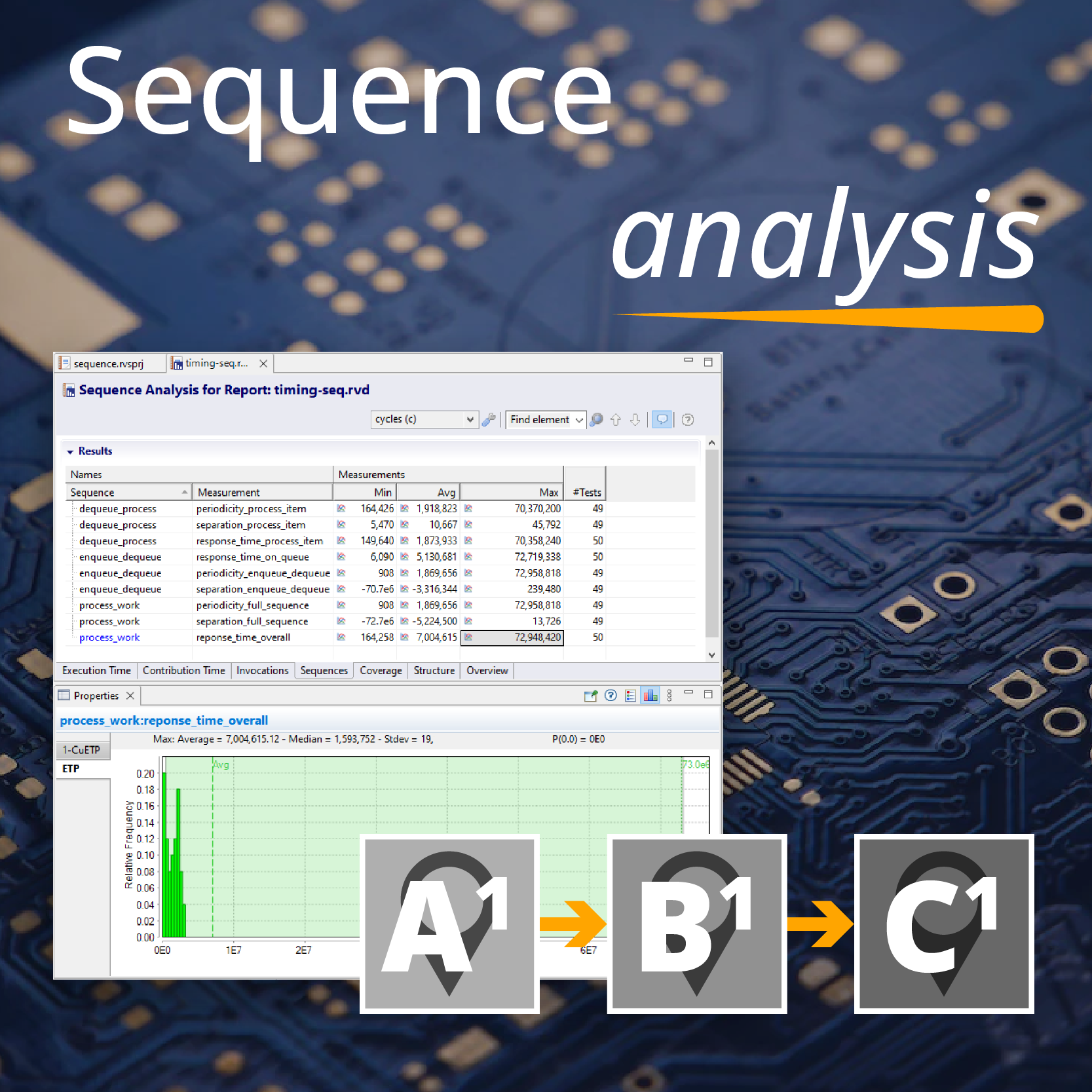

- with host-based execution, you can look at relative execution times of different parts of the software, how execution time scales in practice with the input data, and whether the same code called from different locations has vastly different execution times;

- with a modest simulator, even with only part of the software, you can look at the impact of the RTOS and application on one another. Are scheduling priorities being observed? Are tasks being released when expected? Could tasks be spread out to even out the worst-case load? The timing information also gives a good vehicle for talking about the software execution to systems engineers;

- with development hardware, you can look at the detailed schedulability, including the impact of hardware facilities on the software behaviour. Cache has a big impact, as do access latencies for different hardware devices, but different platforms all seem to have unexpected behaviours that show up clearly in the timing data.
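As a rough illustration of the host-based case in the list above, the following sketch records the longest observed run time of a function for several input sizes. The names `high_water_mark` and `scaling_profile`, and the insertion sort used as the code under test, are hypothetical and chosen only for this example. The figures it produces are observed maxima on the host, not a WCET for the target; they are only useful for comparing relative cost and seeing how time scales with the input.

```python
import time


def high_water_mark(func, arg, repeats=20):
    """Return the longest observed run of func(arg), in nanoseconds."""
    worst = 0
    for _ in range(repeats):
        start = time.perf_counter_ns()
        func(arg)
        elapsed = time.perf_counter_ns() - start
        worst = max(worst, elapsed)
    return worst


def scaling_profile(func, make_input, sizes, repeats=20):
    """Map each input size to the longest observed run time for that size."""
    return {n: high_water_mark(func, make_input(n), repeats) for n in sizes}


def insertion_sort(values):
    """Stand-in for the code under test; cost depends strongly on the input."""
    data = list(values)
    for i in range(1, len(data)):
        key = data[i]
        j = i - 1
        while j >= 0 and data[j] > key:
            data[j + 1] = data[j]
            j -= 1
        data[j + 1] = key
    return data


# Reverse-sorted input is the expensive case for insertion sort.
profile = scaling_profile(insertion_sort, lambda n: list(range(n, 0, -1)), [50, 100, 200])
for size, worst_ns in profile.items():
    print(f"n={size:4d}  longest observed run: {worst_ns} ns")
```

Running the same profile with already-sorted input, or wrapping calls made from different call sites, gives a quick view of how much the execution time of one piece of code can vary.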

These are just a few cases; in practice there is a continuum of different options for execution time analysis deployment, and the earlier it is introduced, the quicker you can start to control your timing uncertainty.
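The question of whether tasks could be spread out to even the worst-case load can also be explored early with a very crude model. The sketch below assumes periodic tasks described by a hypothetical `(period, offset, budget)` tuple in arbitrary ticks, and reports the largest amount of work released at any single instant. It is not a schedulability analysis and ignores priorities, pre-emption and RTOS overheads; it only shows how release offsets change the peak demand.

```python
from math import gcd


def hyperperiod(tasks):
    """Least common multiple of all task periods."""
    result = 1
    for period, _offset, _budget in tasks:
        result = result * period // gcd(result, period)
    return result


def peak_release_demand(tasks):
    """Largest total budget released at one instant.

    tasks: iterable of (period, offset, budget) tuples, all in ticks.
    The horizon covers the largest offset plus one full hyperperiod.
    """
    horizon = hyperperiod(tasks) + max(offset for _p, offset, _b in tasks)
    demand = {}
    for period, offset, budget in tasks:
        for release in range(offset, horizon, period):
            demand[release] = demand.get(release, 0) + budget
    return max(demand.values())


# Same three tasks, first released together, then with staggered offsets.
aligned = [(10, 0, 3), (20, 0, 4), (40, 0, 5)]
staggered = [(10, 0, 3), (20, 5, 4), (40, 15, 5)]

print("peak demand, aligned:  ", peak_release_demand(aligned))    # 12
print("peak demand, staggered:", peak_release_demand(staggered))  # 5
```

With all offsets at zero, every task is released at tick 0 and the peak demand is the sum of the three budgets. With the staggered offsets no two releases coincide, so the peak drops to the largest single budget.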
