Modified condition/decision coverage (MC/DC) requires showing, among other things, that each condition in a decision takes every possible outcome and that each condition independently affects the outcome of the decision.

Coverage analysis tools check for MC/DC by observing how the decision's outcome changes when the value of each condition is changed independently of the other conditions in the decision (though, with some clever logic, masking MC/DC can reduce overheads in some cases).
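To make "independently affects the outcome" concrete, here is a minimal sketch that brute-forces the independence pairs for a small decision. The decision `a and (b or c)` and the helper `independence_pairs` are made up for illustration; this shows the unique-cause idea only and is not a description of how RapiCover works internally.

```python
from itertools import product

def decision(a, b, c):
    # Hypothetical three-condition decision, used only for illustration.
    return a and (b or c)

def independence_pairs(fn, n):
    """For each condition index, list the pairs of test vectors that differ
    only in that condition and produce different decision outcomes."""
    pairs = {i: [] for i in range(n)}
    for vec in product([False, True], repeat=n):
        for i in range(n):
            if vec[i]:
                continue  # visit each pair once, starting from the False side
            flipped = vec[:i] + (True,) + vec[i + 1:]
            if fn(*vec) != fn(*flipped):
                pairs[i].append((vec, flipped))
    return pairs

pairs = independence_pairs(decision, 3)
for i, name in enumerate("abc"):
    print(name, pairs[i])
```

Each condition needs at least one such pair among the executed tests. The brute-force search visits all 2^n input vectors, which is fine for three conditions but explains why this kind of analysis does not scale by hand.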

Most coverage analysis tools support what they deem to be a "reasonable" number of conditions per decision (our customers report that limits of 17 to 20 conditions per decision are common). But what if one of your decisions exceeds this arbitrary limit? You could:

- Change your code (but why should you?)

- Perform MC/DC analysis by hand (how many conditions? 50? good luck!)

RapiCover, however, is not like most coverage analysis tools: it supports up to 1000 conditions per decision.

Q&A:

Why do you support so many conditions per decision?

Because we can, and because your code may include giant decisions (we aren't judging). In fact, when NASA analyzed 5 different Line Replaceable Units (LRUs) from 5 different airborne systems, they found 2 decisions with more than 36 conditions (table below). Source: https://shemesh.larc.nasa.gov/fm/papers/Hayhurst-2001-tm210876-MCDC.pdf

Surely, nobody would write a decision that big, right?

While you're unlikely to write something like this by hand, if you're using model-driven development (MDD), your automatically generated code may include decisions with a large number of conditions (for example, code that aggregates large numbers of boolean signals).

Why only 1000 conditions?

We can support more than 1000 if you really need it! (but we'd be curious to see the code that requires this).

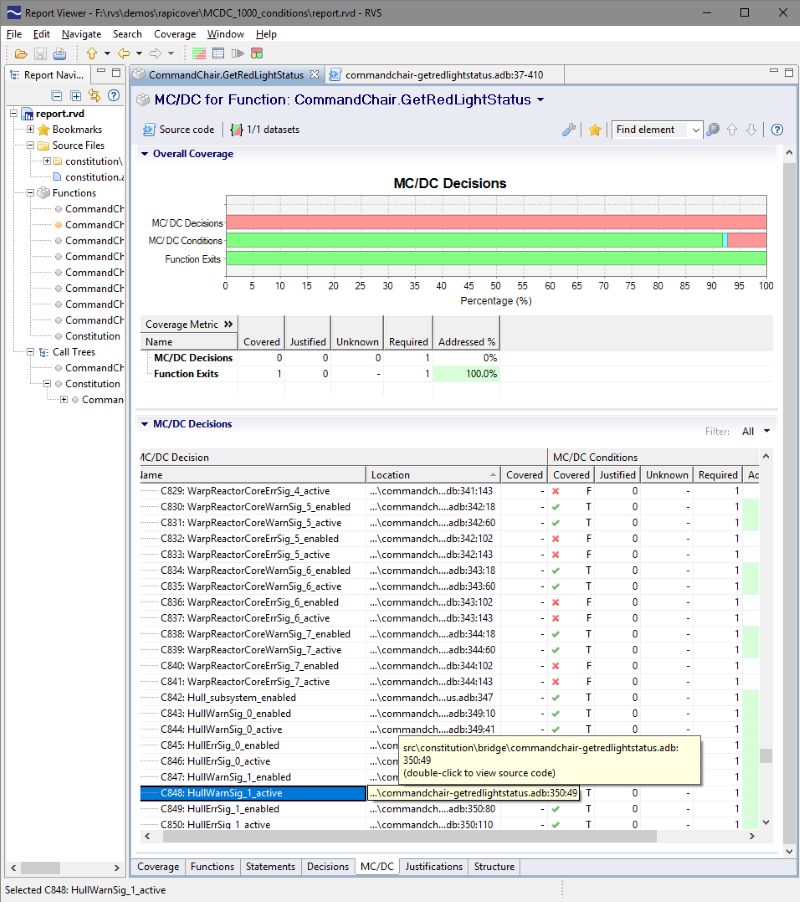

What does a RapiCover report with such large decisions look like?

I'm glad you asked. See Figure 1 below. Note that you can scroll to view the full file in the source code view.

Figure 1. RapiCover report from a decision with almost 1000 conditions

The example in Figure 1 shows a function that aggregates hundreds of boolean signals (just short of 1000) into a "warning light" signal. This is a bit like the piece of software that lights up the engine light on your dashboard. Many error signals can cause this light to come on, and they can all be listed in one large boolean OR expression, which makes a very large MC/DC decision.
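As a rough sketch of why such a decision is still testable, the snippet below builds a test set for an n-input OR: one all-False baseline plus one vector per signal with only that signal raised. The names `warning_light` and `or_mcdc_tests` and the size 999 are assumptions for illustration, not taken from the example in Figure 1.

```python
def warning_light(signals):
    # Hypothetical aggregator: the light is on if any error signal is raised.
    return any(signals)

def or_mcdc_tests(n):
    """Build n + 1 test vectors for an n-input OR: one all-False baseline,
    plus one vector per signal with only that signal raised."""
    baseline = [False] * n
    tests = [baseline]
    for i in range(n):
        vec = baseline.copy()
        vec[i] = True
        tests.append(vec)
    return tests

n = 999  # illustrative size, "just short of 1000"
tests = or_mcdc_tests(n)
baseline = tests[0]

# Each single-signal vector differs from the baseline in exactly one
# condition and flips the outcome, which demonstrates that condition's
# independent effect on the decision.
for vec in tests[1:]:
    assert sum(x != y for x, y in zip(vec, baseline)) == 1
    assert warning_light(vec) != warning_light(baseline)

print(len(tests))  # 1000 tests, compared with 2**999 exhaustive combinations
```

For a pure OR, n + 1 tests are enough to show every condition's independent effect, so the number of tests grows linearly with the number of signals rather than exponentially.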

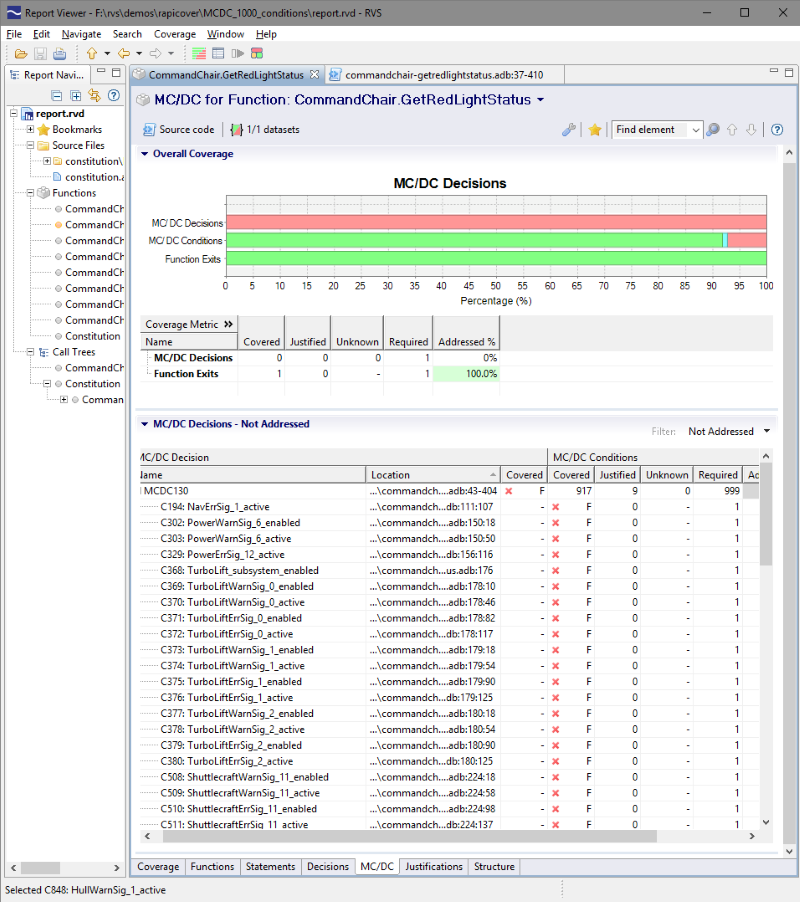

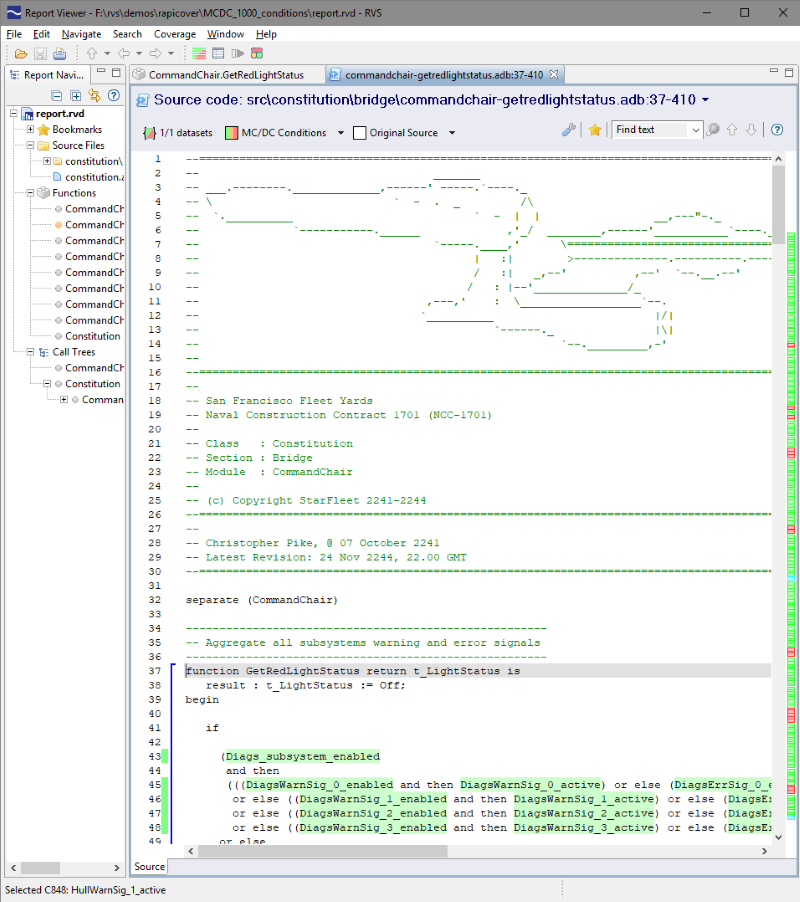

The example report above shows the RapiCover MC/DC view with the coverage status of close to 1000 conditions in both the table view and the colorized source code view.

In the source code view, you can see a few coverage holes. For example, while various subsystems are missing a few tests to cover all their signals, the Turbolift subsystem has not been tested at all, and all of the GravityGenerator signals have been addressed using justifications rather than tested.

As a final note, it is interesting to see that Ada is still a popular choice for aerospace software development in the 23rd century, as this example shows.
