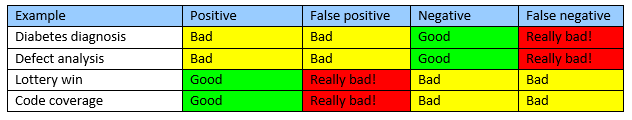

‘False positive’ and ‘false negative’ are terms commonly heard in software verification. But depending on whether you are looking at static or dynamic analysis, the level of seriousness each conveys can differ.

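But what do these terms mean? The terms positive and negative describe the result of testing a hypothesis or assertion: a positive result indicates that the hypothesis is true, and a negative result indicates that it is false. A "false" positive or negative is a result that turns out to be wrong. A false positive reports the hypothesis as true when it is actually false, and a false negative reports it as false when it is actually true.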

This could be good or bad depending on how you view the result. In a test for diabetes, you would be hoping for the result to come back negative. Here a positive result is bad news, but a false negative is arguably worse: you now think you do not have diabetes when you actually do, so you may not be aware that you need medication. In this case, a false negative can be quite dangerous.

But this is not always the case. Take winning the lottery: a positive result is good, because you have won! But a false positive may mean that you start spending money you don’t have, quit your job and buy a house you can’t afford. In this case, a false positive can be pretty dangerous too!
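To pin down the four possible outcomes, here is a minimal Python sketch that labels a single result by comparing what a test reported against what is actually true. The function name `classify` and its parameters are illustrative assumptions, not part of any Rapita tool.

```python
def classify(reported: bool, actual: bool) -> str:
    """Label one result against the ground truth.

    reported: what the test says (True = positive, the hypothesis holds)
    actual:   whether the hypothesis really holds
    """
    if reported and actual:
        return "true positive"
    if reported and not actual:
        return "false positive"
    if not reported and actual:
        return "false negative"
    return "true negative"


# Diabetes: the test says "no", but the patient does have diabetes.
print(classify(reported=False, actual=True))  # false negative
# Lottery: you are told you won, but you didn't.
print(classify(reported=True, actual=False))  # false positive
```

Whether a given error is the dangerous one depends on what a positive means in context, which is exactly the point the examples above make.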

So how are these terms used in software verification? Let’s look at two examples that are commonly used: static (defects) analysis, and dynamic analysis (code coverage).

Static (defects) analysis

Static analysis tools that look for defects in code tend to work by asserting that there is a problem. Here, a positive result is bad news: you have a defect. But at least you know about it, so you can fix it. A false positive costs you the time spent investigating a reported defect that isn't really there, but a false negative is worse: you have a defect, and the tool has failed to spot it.
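If you have a ground truth to compare against, for example a benchmark with deliberately seeded defects, both kinds of error can be counted with simple set operations. The sketch below assumes hypothetical `file:line` locations for the tool's findings and for the known defects; it is not the output format of any particular tool.

```python
# Hypothetical findings from a static analysis run, and the defects
# known to be present in the code (e.g. seeded into a benchmark).
reported = {"parse.c:42", "parse.c:87", "io.c:15"}
actual_defects = {"parse.c:42", "io.c:15", "io.c:60"}

true_positives = reported & actual_defects   # real defects the tool found
false_positives = reported - actual_defects  # reported, but not real defects
false_negatives = actual_defects - reported  # real defects the tool missed

print(sorted(false_positives))  # ['parse.c:87']
print(sorted(false_negatives))  # ['io.c:60']
```

The false positive here wastes some review time; the false negative is a defect that ships unnoticed.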

Dynamic analysis (code coverage)

Flip this around for dynamic verification, taking code coverage as an example. Here you are asserting that you have exercised a part of the software, so a positive result is good news: you have achieved coverage. It's a false positive that you need to look out for, because it means you think you have covered a piece of code when you haven't, and you may take credit for coverage you never achieved.
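To make the coverage case concrete, the sketch below uses Python's standard `sys.settrace` hook to record which lines of a small function actually execute, then compares that against a hypothetical coverage report that claims every line was covered. Lines in the report but absent from the trace are false positives. The function names and the report contents are illustrative assumptions; real coverage tools collect this data in their own ways.

```python
import sys


def executed_line_offsets(func, *args):
    """Run func(*args) and return the set of line offsets (relative to the
    'def' line) that actually executed, using the sys.settrace hook."""
    code = func.__code__
    executed = set()

    def tracer(frame, event, arg):
        # Record only 'line' events raised inside func's own frame.
        if event == "line" and frame.f_code is code:
            executed.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(None)
    return executed


def check_speed(speed):
    if speed > 100:        # offset 1
        return "too fast"  # offset 2
    return "ok"            # offset 3


# Hypothetical coverage report that (wrongly) claims every line was covered.
reported_covered = {1, 2, 3}

actual = executed_line_offsets(check_speed, 50)
false_positives = reported_covered - actual
print(sorted(false_positives))  # [2]: the "too fast" branch never ran
```

Offsets are measured from the `def` line so they stay stable wherever the function sits in the file. Trusting the report here would mean taking credit for the `"too fast"` branch without ever testing it.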

If you are still confused, you are not alone: this has been the cause of many a mix-up! Remember, false diagnoses are never a good thing, but if a positive is bad, a false negative is worse, and if a positive is good, a false positive is worse!

Here at Rapita, we put a lot of effort, through our qualification work, into ensuring that our tools don't produce false positives or negatives. When we do identify defects in our tools that could produce them, we make sure that you know about them.
